The Engineering of Command Line Interfaces: A Comprehensive Guide to Modern Design and Implementation

The CLI Is the Last Real Interface

The Command Line Interface has evolved. Hard. What used to be a basic text-based tool for typing commands is now the primary interface for managing cloud infrastructure, developer tools, and automated systems. In 2025, the terminal is in a full renaissance. While graphical interfaces keep getting more abstracted and dumbed down, the CLI remains the most direct connection between an engineer and the kernel.

This isn't casual scripting anymore. This is sophisticated software product engineering. DevOps, Data Science, Platform Engineering. All of them demand CLIs that are high-performance products, not afterthoughts. They need strict usability standards and seamless machine interoperability. The CLIG manifesto nails it: CLIs should be scriptable, but they must prioritize human usability first. That tension creates real engineering challenges. Your tool has to deliver rich, interactive feedback to humans while also outputting clean structured data for machines.

This is a comprehensive breakdown of where CLI engineering stands right now. Architectural patterns, language-specific ecosystems, advanced UI paradigms. Everything you need to build world-class command-line tools. We'll look at how modern languages use type safety, concurrency, and single-binary distribution to solve problems that plagued developers for decades. And we'll examine why Developer Experience (DX) is now the real measure of whether your CLI succeeds or dies.

Architectural Foundations

Before you touch a specific language, you need to understand the universal principles that make CLI tools work. These principles come from the Unix philosophy of composition and modularity. They've evolved, but the core logic still holds.

Streams and Composition

The core mechanism of any CLI is the standard stream protocol. Separating data from diagnostics is critical for making tools composable in a pipeline.

Standard Output (stdout) vs. Standard Error (stderr)

If you want professional tools, you need strict stream separation. The stdout stream is for user-requested data only. If the user asks for a list of active pods or a file checksum, stdout has that and nothing else. Log entries, warnings, progress indicators? All of that goes to stderr.

This guarantees that output from one command can be reliably piped to another without parsing errors. If your tool prints "Fetching data..." to stdout before a JSON object, a downstream parser like jq will break. Current best practice says even success messages belong in stderr if the tool's primary purpose is data output.
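The separation described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `emit` helper and the pod names are hypothetical, and a real tool would fetch its data from somewhere.

```python
import json
import sys


def emit(result, out=sys.stdout, err=sys.stderr):
    """Write diagnostics to `err` and the requested data to `out`."""
    # Progress and status messages are diagnostics, so they go to stderr.
    print("Fetching data...", file=err)
    # Only the machine-readable payload reaches stdout.
    json.dump(result, out)
    out.write("\n")
    # Even the success message stays off stdout.
    print("Done.", file=err)


# Hypothetical payload; a real tool would query an API here.
emit({"pods": ["api-0", "worker-1"]})
```

Piped into a JSON parser, only the JSON line travels down the pipe; the status lines still show up in the terminal because stderr is not redirected.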

Exit Codes as Control Signals

Exit codes are how a CLI talks to the operating system and calling scripts. 0 means success. Everything else requires careful thought.

Binary Success/Failure: For many applications, a simple distinction (0 for success and 1 for failure) is sufficient and recommended to maintain clarity.

Semantic Error Codes: In complex systems, distinct exit codes enable parent processes to respond appropriately. For example, sysexits.h defines standard codes such as EX_USAGE (64) for usage errors and EX_CONFIG (78) for configuration issues.

Reserved Ranges: Developers should avoid using exit codes 126 (command cannot execute), 127 (command not found), and 128+ (signal termination) since these are reserved by the shell.
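One way to apply these rules is to map error types to exit codes in a single place. The sketch below assumes two hypothetical exception classes (`UsageError`, `ConfigError`) and hardcodes the sysexits.h values rather than relying on platform-specific constants.

```python
import sys

# Values from sysexits.h, defined here so the sketch does not depend on the platform.
EX_OK = 0
EX_USAGE = 64
EX_CONFIG = 78


class UsageError(Exception):
    """Hypothetical error: the command was invoked incorrectly."""


class ConfigError(Exception):
    """Hypothetical error: the configuration is missing or invalid."""


def exit_code_for(exc):
    """Map an exception to the exit code a calling script can branch on."""
    if isinstance(exc, UsageError):
        return EX_USAGE
    if isinstance(exc, ConfigError):
        return EX_CONFIG
    return 1  # generic failure, well below the reserved 126+ range


def run(action):
    """Run `action` and return an exit code instead of letting errors escape."""
    try:
        action()
    except Exception as exc:
        # The message is a diagnostic, so it goes to stderr.
        print(f"error: {exc}", file=sys.stderr)
        return exit_code_for(exc)
    return EX_OK
```

A real entry point would finish with something like `sys.exit(run(main))`, so the shell sees the mapped code.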

The Grammar of Commands

How users construct commands matters more than most developers think. The industry has settled on a standard syntax: program [global options] command [subcommand] [command options] [arguments].

Subcommands and the Multicall Binary

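The single-binary subcommand pattern, used by Git and Docker, packs related functions into one executable. (A true multicall binary, as in BusyBox, goes a step further and selects its behavior from the name it was invoked under.) Either way, this keeps the system PATH clean and lets you share configs and auth across commands. One rule: be consistent. If one destructive subcommand requires a confirmation flag, every destructive subcommand should.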

Flags vs. Arguments

A classic design decision: positional arguments or flags? Best practice says positional arguments are for the command's primary object, like the filename in rm filename. Everything else should be a flag. This reduces cognitive load. Users don't have to memorize the order of five different arguments.
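The grammar above (global options, then a command, then one positional object plus named flags) can be sketched with Python's standard argparse module. The program name `archiver`, the `pack` subcommand, and its flags are hypothetical and exist only for illustration.

```python
import argparse


def build_parser():
    # "archiver" and its subcommand are hypothetical names used for illustration.
    parser = argparse.ArgumentParser(prog="archiver")
    # Global option: applies to every subcommand and precedes the command name.
    parser.add_argument("--verbose", action="store_true")
    commands = parser.add_subparsers(dest="command", required=True)

    pack = commands.add_parser("pack", help="create an archive")
    # Positional argument: the primary object the command acts on.
    pack.add_argument("path")
    # Everything else is a named flag, so users never memorize an argument order.
    pack.add_argument("--level", type=int, default=6)
    pack.add_argument("--output", default="out.tar")
    return parser


# Parse an explicit argument list instead of sys.argv to keep the sketch self-contained.
args = build_parser().parse_args(["--verbose", "pack", "src/", "--level", "9"])
print(args.command, args.path, args.level, args.output, args.verbose)
```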

Configuration Hierarchies

A solid CLI pulls configuration from multiple sources and merges them into one runtime state. This layering gives you flexibility across local dev, CI/CD, and production.

Priority from highest to lowest:

- Flags - Explicit CLI arguments (e.g., --port 8080). For overriding defaults on specific executions.

- Environment Variables - System vars (e.g., APP_PORT=8080). For containerized environments and 12-factor apps.

- Local Config - File in CWD (e.g., .myapprc). For project-specific settings.

- Global Config - File in home dir (e.g., ~/.config/myapp/config.yaml). For user-specific preferences.

- Defaults - Hardcoded values. Fallback behavior.

This precedence model lets users set global defaults while easily overriding them per project or per command.
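The precedence order can be sketched with a `ChainMap`, which returns the first match across a stack of dictionaries. The `resolve` function, the `APP_` prefix handling, and the sample values are assumptions for illustration. Reading the config files and coercing types are left out, so the value taken from the environment stays a string.

```python
from collections import ChainMap

DEFAULTS = {"port": 8080, "host": "127.0.0.1"}


def resolve(flags, env, local_cfg, global_cfg, defaults=DEFAULTS):
    """Merge configuration layers; earlier layers win over later ones."""
    # Drop unset flags (None) so they do not shadow lower-priority layers.
    flags = {k: v for k, v in flags.items() if v is not None}
    # Translate APP_PORT-style variables into config keys such as "port".
    env = {k[len("APP_"):].lower(): v for k, v in env.items() if k.startswith("APP_")}
    return dict(ChainMap(flags, env, local_cfg, global_cfg, defaults))


settings = resolve(
    flags={"port": None},                            # flag not given on this run
    env={"APP_PORT": "9000"},                        # wins over both config files
    local_cfg={"host": "0.0.0.0"},                   # wins over the global file
    global_cfg={"port": 7000, "host": "localhost"},
)
print(settings)
```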

The Rust Ecosystem: Performance and Correctness

As of 2025, Rust is the top language for high-performance, system-level CLI tools. Zero-cost abstractions, no garbage collection (fast startup times), and strict type safety make it the natural replacement for legacy Unix tools.

Argument Parsing with Clap

The clap crate is the dominant library in the Rust ecosystem. Two APIs, two different engineering needs: the Derive API and the Builder API.

The Derive API: Type-Safe Definition

This is the preferred method for most applications. It uses Rust's procedural macros to derive parsing logic from struct definitions. Your CLI interface stays in sync with your internal data structures by definition.

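    use std::path::PathBuf;

    use clap::{Parser, Subcommand};

    #[derive(Parser)]
    #[command(name = "archiver", version = "2.0")]
    struct Cli {
        /// Sets a custom config file
        #[arg(short, long, value_name = "FILE")]
        config: Option<PathBuf>,

        #[command(subcommand)]
        command: Commands,
    }

    // Placeholder subcommand (not part of the original snippet) so the struct compiles.
    #[derive(Subcommand)]
    enum Commands {
        /// List archive contents
        List,
    }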

This declarative style separates interface definitions from execution logic. Clap handles parsing, validation, and help generation automatically, including colored help text. The value_parser feature converts string arguments into complex Rust types with built-in error checking.

The Builder API: Dynamic Construction

The Derive API is clean and simple. But when your argument structure can't be known at compile time, like tools that load plugins, you need the Builder API. It gives you imperative parser construction with maximum flexibility. You pay for it in verbosity.
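The idea of imperative, runtime parser construction is not specific to clap. The sketch below shows the same pattern with Python's argparse rather than clap's Builder API. The plugin descriptors are hypothetical stand-ins for whatever a real tool would discover at startup.

```python
import argparse

# Hypothetical plugin descriptors, e.g. discovered on disk at startup.
PLUGINS = [
    {"name": "lint", "options": [("--fix", bool)]},
    {"name": "bench", "options": [("--runs", int)]},
]


def build_parser(plugins):
    """Assemble the parser at runtime from whatever plugins were found."""
    parser = argparse.ArgumentParser(prog="tool")
    commands = parser.add_subparsers(dest="command", required=True)
    for plugin in plugins:
        sub = commands.add_parser(plugin["name"])
        for flag, kind in plugin["options"]:
            if kind is bool:
                # Boolean options become on/off switches.
                sub.add_argument(flag, action="store_true")
            else:
                # Other options take a value converted by the declared type.
                sub.add_argument(flag, type=kind)
    return parser


args = build_parser(PLUGINS).parse_args(["bench", "--runs", "10"])
print(args.command, args.runs)
```

The cost is the same as with the Builder API: nothing checks the interface until the program runs.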

Error Handling: Miette and Color-Eyre

Rust's Result type forces you to think about failure states. Good. But unwrapping errors or dumping raw stack traces on users? That's bad DX.

The ecosystem has moved toward treating errors as UI components:

- Miette: Diagnostic errors with rendered code snippets, highlighted error locations, and suggestions for fixes.

- Color-Eyre: Panic and error report handlers that capture backtraces (and span traces, when tracing is in use) and produce colorized, user-friendly reports, optionally with a link for filing a GitHub issue. Essential for beta software.

Best practice: use thiserror for library code (structured errors) and miette or anyhow in the main binary to format those errors for display.

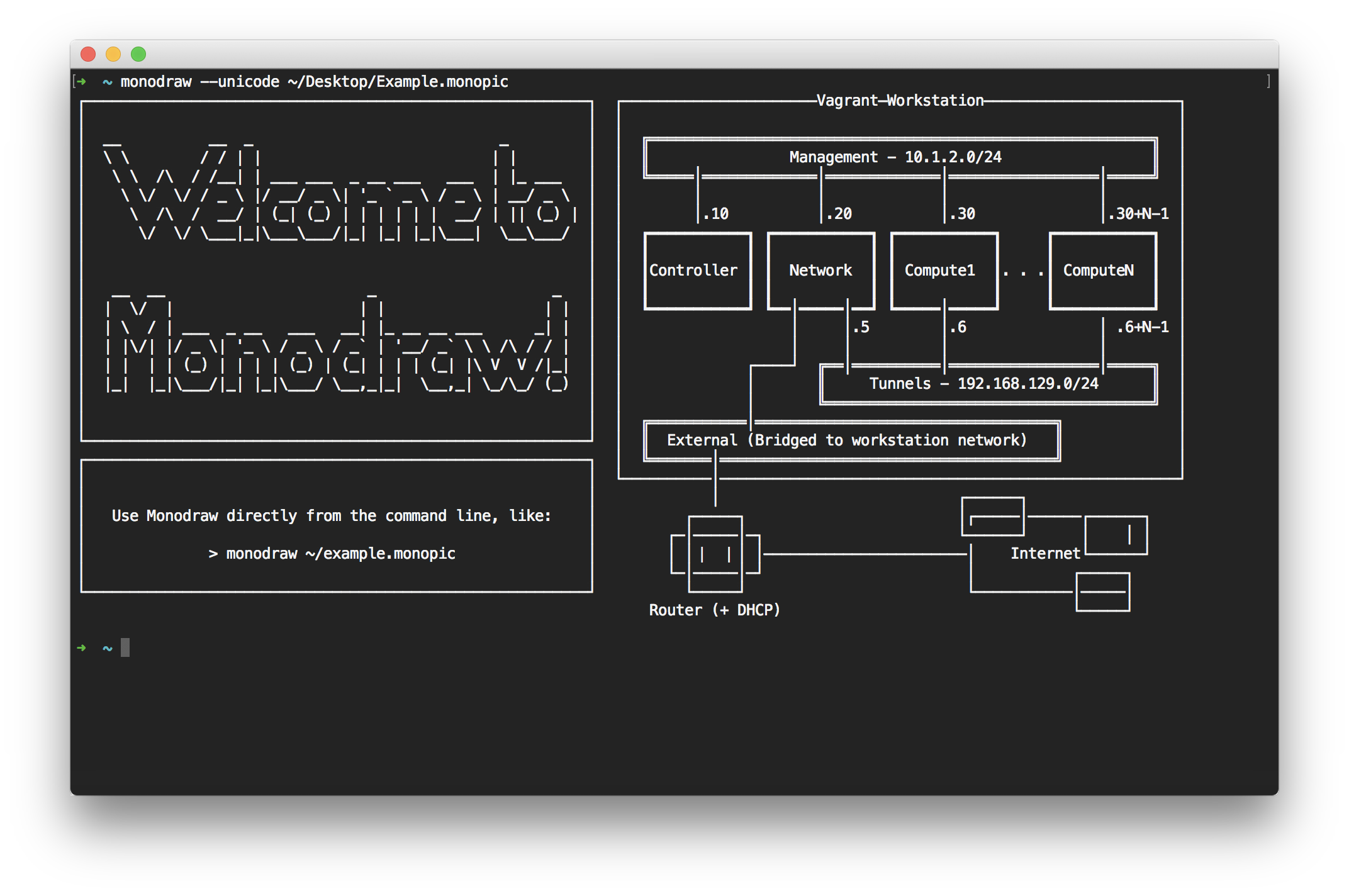

TUI with Ratatui

When your CLI needs more than basic I/O, you reach for ratatui, a fork of tui-rs. It uses an immediate-mode rendering model for high-performance UI rendering. You manage the render cycles yourself. It abstracts raw terminal escape codes but keeps you in control. For simpler interactive inputs, dialoguer or inquire get the job done with less overhead.

Distribution: Cargo-Dist

Distribution has always been a pain point in CLI engineering. Rust's cargo-dist fixes this by automating build and release pipelines. It integrates with GitHub Actions to cross-compile binaries for major platforms, generate installers, and create GitHub Releases. It solves the "last mile" problem: getting your Rust CLI to users who don't have the Rust toolchain installed.

The Go Ecosystem: The DevOps Standard

Go is the foundation of cloud-native infrastructure. Kubernetes, Docker, Terraform. All Go. The language prioritizes stability, fast compilation, and concurrency.

The Cobra/Viper Stack

The combination of cobra (command structure) and viper (configuration management) is the de facto standard for Go CLIs.

Cobra: Structural Command Patterns

Cobra gives you the framework for command/subcommand structure. It promotes a disciplined project layout where each command gets its own struct and file, typically under a cmd/ directory. It auto-generates shell completion scripts and man pages, keeping docs in sync with code.

Viper: Configuration Management

Viper handles configuration precedence but requires explicit flag binding. Unlike Rust's clap where struct hydration is automatic, Viper makes you wire things up manually.

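    // Best practice: bind each flag explicitly so Viper can apply its precedence rules.
    rootCmd.PersistentFlags().String("port", "8080", "Port to listen on")
    // BindPFlag returns an error (for example, when the flag lookup is nil); do not ignore it.
    cobra.CheckErr(viper.BindPFlag("port", rootCmd.PersistentFlags().Lookup("port")))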

This explicit binding links CLI flags to the config registry and enables the "Flag > Env > Config" precedence hierarchy.

Project Layout

The community-maintained "Standard Go Project Layout" is widely used, though it is a convention rather than an official Go standard. Strict separation of concerns is non-negotiable for large CLI codebases:

- cmd/appname/main.go: The entry point. Keep it minimal. Wire dependencies and call rootCmd.Execute().

- internal/: Core application logic. Protected from external access.

- pkg/: Library code intended for sharing. Useful when the CLI wraps an API client.

The Elm Architecture in Go: Bubble Tea

Go long lacked a TUI framework with a clear architectural model. Bubble Tea changed that. It implements The Elm Architecture (Model-View-Update), bringing a functional approach to terminal UIs:

- Model: A struct holding application state.

- View: A function that renders state to a string.

- Update: A function that handles events and returns new state.

This architecture separates state management from rendering. Complex interactive tools become more deterministic and testable.
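The Model-View-Update loop is language-agnostic, so it can be sketched without a terminal at all. The following is not Bubble Tea's API. It is a minimal Python illustration in which the `Model` fields, the key names, and the menu items are all hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Model:
    """Application state: a list of choices and the cursor position."""
    items: tuple
    cursor: int = 0
    done: bool = False


def update(model, key):
    """Handle one event and return the next state; never mutates `model`."""
    if key == "down":
        return replace(model, cursor=min(model.cursor + 1, len(model.items) - 1))
    if key == "up":
        return replace(model, cursor=max(model.cursor - 1, 0))
    if key == "enter":
        return replace(model, done=True)
    return model


def view(model):
    """Render the state to a string; no I/O happens here."""
    return "\n".join(
        ("> " if i == model.cursor else "  ") + item
        for i, item in enumerate(model.items)
    )


# Drive the loop with a scripted event list instead of a real terminal.
model = Model(items=("build", "test", "deploy"))
for key in ["down", "down", "down", "up", "enter"]:
    model = update(model, key)
print(view(model))
```

Because `update` and `view` are plain functions of the state, a test can replay a list of events and compare the rendered string, which is where the determinism comes from.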

The Python Ecosystem: Data Science and Rapid Prototyping

Python dominates data science, machine learning, and system scripting. Historically, Python CLIs used argparse or click. By 2025, much of the ecosystem has moved toward Typer, which uses modern type hinting to kill boilerplate.

Typer: Type Hints as Configuration

Typer is built on top of Click. It uses Python 3.6+ type hints to define the CLI interface.

    import typer


    def main(name: str, retries: int = 3, verbose: bool = False):
        """Say hello to NAME with configurable retries."""
        if verbose:
            typer.echo(f"Running with {retries} retries")
        typer.echo(f"Hello {name}")


    if __name__ == "__main__":
        # typer.run builds the CLI from main's signature and invokes it.
        typer.run(main)

Typer introspects function signatures to generate the parser. Parameters with default values become options automatically. Write Once philosophy: your implementation logic is your interface definition.
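To make the introspection step concrete, here is a rough stdlib-only sketch of the idea. It is not how Typer is implemented. The `parser_from_signature` helper is hypothetical, and it assumes every parameter carries a simple type annotation such as `str`, `int`, or `bool`.

```python
import argparse
import inspect


def parser_from_signature(func):
    """Build an argparse parser from a function's annotated signature."""
    parser = argparse.ArgumentParser(prog=func.__name__, description=inspect.getdoc(func))
    for name, param in inspect.signature(func).parameters.items():
        if param.default is inspect.Parameter.empty:
            # No default: a required positional argument.
            parser.add_argument(name, type=param.annotation)
        elif param.annotation is bool:
            # Boolean default: an on/off switch.
            parser.add_argument(f"--{name}", action="store_true", default=param.default)
        else:
            # Any other default: an optional flag converted by its annotated type.
            parser.add_argument(f"--{name}", type=param.annotation, default=param.default)
    return parser


def greet(name: str, retries: int = 3, verbose: bool = False):
    """Say hello to NAME with configurable retries."""
    return f"hello {name} (retries={retries}, verbose={verbose})"


# Parse an explicit argument list, then call the function with the parsed values.
args = parser_from_signature(greet).parse_args(["Ada", "--retries", "5"])
print(greet(**vars(args)))
```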

Rich: Redefining Terminal Output

Rich set a new standard for what Python CLIs can look like. Rich text parsing, Markdown rendering, syntax highlighting, advanced tables.

- Traceback Handler: Clarifies error messages with syntax-highlighted user code and readable variable displays.

- Async Integration: Rich components like progress bars work with asyncio for modern, non-blocking CLI applications.

Configuration: Pydantic Settings

Using Pydantic for configuration management is a common best practice. The BaseSettings class (provided by the separate pydantic-settings package since Pydantic v2) reads from environment variables automatically, with type validation built in.
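The underlying idea, typed fields populated from the environment and validated on load, can be sketched with the standard library alone. This is not Pydantic's API. The `Settings` fields, the `APP_` prefix, and the `load_settings` helper are assumptions for illustration.

```python
import os
from dataclasses import dataclass, fields


@dataclass
class Settings:
    """Hypothetical settings; each field can be overridden by APP_<FIELD>."""
    port: int = 8080
    debug: bool = False
    host: str = "127.0.0.1"


def _convert(raw, kind):
    """Convert a raw environment string to the field's declared type."""
    if kind is bool:
        if raw.lower() in ("1", "true", "yes", "on"):
            return True
        if raw.lower() in ("0", "false", "no", "off"):
            return False
        raise ValueError(f"not a boolean: {raw!r}")
    return kind(raw)


def load_settings(environ=None, prefix="APP_"):
    """Build Settings from environment variables, validating each value."""
    environ = os.environ if environ is None else environ
    values = {}
    for field in fields(Settings):
        raw = environ.get(prefix + field.name.upper())
        if raw is not None:
            try:
                values[field.name] = _convert(raw, field.type)
            except ValueError as exc:
                # Name the offending variable so the user knows what to fix.
                raise ValueError(f"{prefix}{field.name.upper()}: {exc}") from exc
    return Settings(**values)


# Pass an explicit mapping instead of os.environ to keep the sketch deterministic.
print(load_settings({"APP_PORT": "9000", "APP_DEBUG": "true"}))
```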

Distribution: The Rise of Uv

Distribution used to be Python's biggest headache. By 2025, uv from Astral had changed the game. It's a fast package manager that also manages Python versions and tools. Run uv tool install my-cli and it installs the tool into an isolated environment and exposes its executables on your PATH. Distribution, largely solved.

The Node.js Ecosystem: Web-Native Tooling

Node.js is where frontend tooling lives. Its ecosystem lets you build CLIs that feel natural to web developers, using JSON and hooking into npm/yarn workflows.

Frameworks: Commander vs. Oclif

- Commander.js: The veteran library. Ideal for smaller utilities with a fluent API for command definitions. Lightweight but less structured.

- Oclif: Built by Heroku and Salesforce. Designed for complex CLI suites with strict directory structure, plugin support, and automated documentation.

React in the Terminal: Ink

Ink brings React's component model into the terminal. Build UIs with React components and hooks, rendered through a custom terminal renderer. If you know React, you already know how to build terminal UIs.

Single Executable Applications (SEA)

This is a big deal for Node.js. Historically, distributing Node apps meant the user needed Node installed. New tooling and an experimental Node.js feature now let engineers package a script into a blob and inject that blob into a copy of the Node binary itself. You get a standalone executable that runs on systems without Node.js installed. This closes the portability gap with Go and Rust. No more "install Node first" as a prerequisite for your CLI tool.